Motion is Identity: The Future of Style, AI, and ChatGPT Viral Photo Editing

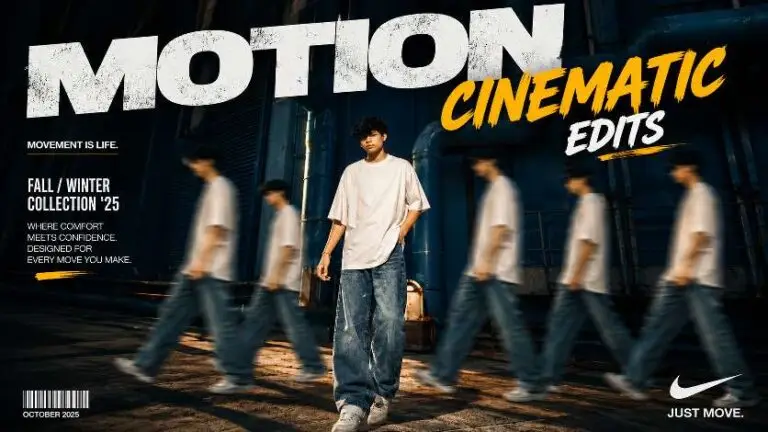

In 2026 feeds, style is not only a color grade. It is also how fabric moves across two frames, how hair catches rim light when the head turns, and how a pose reads mid-transition. AI can invent a beautiful still, but carousel and reel workflows expose every inconsistency between frames, so motion needs to be briefed as explicitly as lighting.

Identity as a sequence, not a snapshot

Think in triptychs: frame A sets posture, frame B carries motion blur or a wardrobe shift, frame C lands the emotional beat. ChatGPT can generate these as a table so your editor or template app receives explicit continuity notes (hair part direction, jacket lapel state, shadow edge position) rather than a vague “same vibe.”
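The triptych-as-table idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed workflow: the `Frame` class, its field names, the `continuity_table` and `drifted_fields` helpers, and all sample values are hypothetical. It renders three frames as a Markdown table that can be pasted into a prompt, and flags any attribute that was meant to stay locked but changes between frames.

```python
from dataclasses import dataclass

# Hypothetical structure: the fields mirror the continuity notes named in
# the text (hair part, lapel state, shadow edge) and are not a standard.
@dataclass
class Frame:
    label: str
    role: str
    hair_part: str
    lapel_state: str
    shadow_edge: str

def continuity_table(frames):
    """Render frames as a Markdown table for pasting into a prompt."""
    lines = [
        "| Frame | Role | Hair part | Jacket lapel | Shadow edge |",
        "| --- | --- | --- | --- | --- |",
    ]
    for f in frames:
        lines.append(
            f"| {f.label} | {f.role} | {f.hair_part} | {f.lapel_state} | {f.shadow_edge} |"
        )
    return "\n".join(lines)

def drifted_fields(frames, locked):
    """Return the locked field names whose value differs across frames."""
    return [name for name in locked if len({getattr(f, name) for f in frames}) > 1]

# Sample values are invented for illustration; frame C flips the hair part.
triptych = [
    Frame("A", "sets posture", "left", "flat", "camera-left cheek"),
    Frame("B", "carries motion", "left", "lifted by turn", "camera-left cheek"),
    Frame("C", "lands emotional beat", "right", "flat", "camera-left cheek"),
]

brief = continuity_table(triptych)
drift = drifted_fields(triptych, locked=["hair_part", "shadow_edge"])
print(brief)
print("Drift in locked fields:", drift)
```

In this sample the lapel is allowed to change in frame B because it is not in the locked list, while the flipped hair part in frame C is reported as drift before anything is generated.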

Motion language that models understand

Use verbs tied to physics: drape, bounce, drag, settle, oscillate. Pair each verb with a magnitude (“subtle 2–3% strap slip across shoulder”). Avoid “dynamic energy” unless you translate it into shutter-speed language, for example “1/120s sports crispness” versus “1/30s lyrical blur.”
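One way to enforce this vocabulary is a small helper that refuses vague terms. The sketch below is hypothetical: `PHYSICS_VERBS` simply copies the verbs listed above, and `motion_phrase`, its parameters, and its output format are assumptions made for illustration.

```python
# Verbs copied from the list above; extend the set for your own briefs.
PHYSICS_VERBS = {"drape", "bounce", "drag", "settle", "oscillate"}

def motion_phrase(subject, verb, magnitude, shutter=None):
    """Build one motion instruction; reject verbs outside the physics list."""
    if verb not in PHYSICS_VERBS:
        raise ValueError(
            f"'{verb}' is not in the physics verb list: {sorted(PHYSICS_VERBS)}"
        )
    parts = [f"{subject}: {verb}", magnitude]
    if shutter:
        # Free-text shutter description, e.g. "1/30s lyrical blur".
        parts.append(f"shutter {shutter}")
    return ", ".join(parts)

print(motion_phrase("coat hem", "settle", "subtle", "1/30s lyrical blur"))
```

Calling `motion_phrase("hair", "dynamic energy", "high")` raises a `ValueError`, which is the point: the vague term has to be rewritten as a verb, a magnitude, and optionally a shutter description before it reaches the prompt.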

Copy-ready continuity brief

Why this matters for brand work

Brands now reuse creator lanes across paid and organic placements. If your AI pipeline cannot repeat wardrobe seams and accessory placement from frame to frame, you spend more time fixing than creating. Motion-first prompting reduces rework because it forces those continuity decisions early.